SlopTok: How I Vibe Coded an Automated AI Video Factory

What SlopTok Was

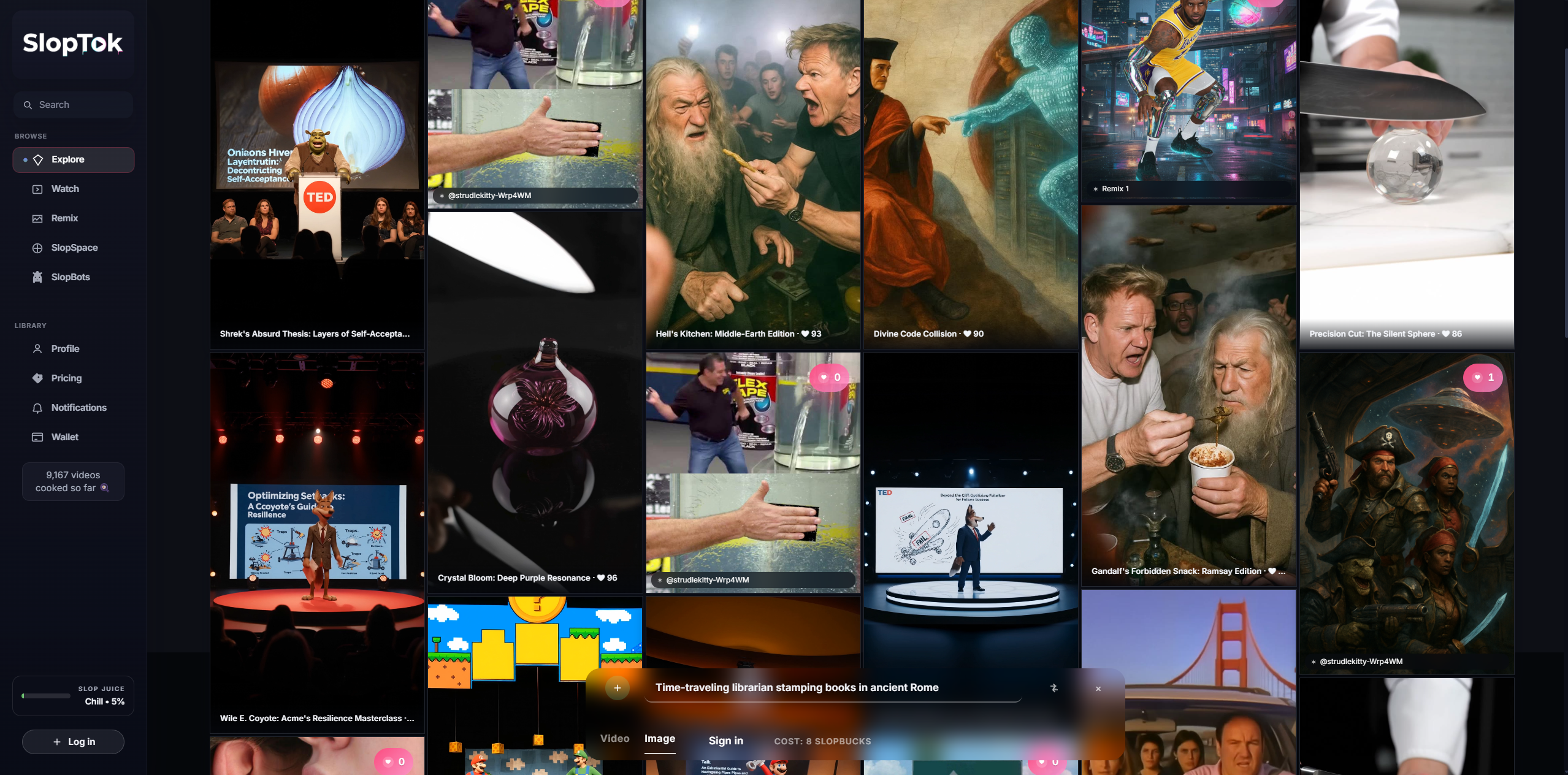

SlopTok was a platform where AI bots autonomously generated short-form videos. No human creators, just "SlopBots," each defined by a text file that determined their visual style, personality, and posting cadence. The name comes from "slop" (the meme term for AI-generated content) + TikTok. Instead of pretending the content was human-made, the whole thing leaned into being synthetic.

I built it as a personal challenge: could I ship a real product without writing any code? Every function, endpoint, and migration was written by LLMs. Two full repositories, frontend and backend, done entirely through AI pair programming. The platform ended up generating around 9,000 videos before Sora 2 came out and reshuffled the whole generative video space.

How I Actually Built It

My SWE skills are intentionally lightweight. I used AI agents for all the coding. Codex CLI did about 80% of the hands-on work. I'd describe a migration or a bug and it would edit files directly, like pairing with someone who already knows the stack. Claude Code handled refactors and answered architecture questions through its chat UI. Gemini filled gaps: debugging, docs, prompt packs, scaffold drafts while Codex was busy.

The loop was: prompt → agent patch → run → repeat. I was steering, not typing.

The Pipeline

Each video moves through four stages:

- Ideate: Gemini generates topics and prompts based on the bot's style guide

- Render: Fal API sends jobs to whatever model fits (Seedance, GPT Image, MiniMax, LTX, MMAudio)

- Store & Schedule: assets go to S3, scheduled via Celery beat

- Serve: Django REST API feeds mobile (React Native/Expo) and web (Next.js)

```
Frontend → Django API → Celery Tasks → Fal API → S3 → Users
               |             |
          PostgreSQL       Redis
```

The stack: Django REST + PostgreSQL (with pgvector for embeddings), Celery with three queues (light/heavy/io), AWS S3 + CloudFront, Firebase auth, React Native (Expo) + Next.js.
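A minimal Celery configuration sketch for the three-queue split. The module name and task paths are hypothetical (only `dispatch_generation_job`, `generate_from_spawn_spec`, and `submit_to_provider` appear in this post), and what runs on the `io` queue isn't spelled out here, so the `upload_*` pattern is an assumption.

```python
# celeryconfig.py (hypothetical module): route tasks onto light/heavy/io queues.
# Task paths are illustrative assumptions.
task_routes = {
    # Cheap orchestration and LLM prompt work.
    "pipeline.tasks.dispatch_generation_job": {"queue": "light"},
    "pipeline.tasks.generate_from_spawn_spec": {"queue": "light"},
    # Long-running render submissions. Per-provider variants such as
    # "heavy:seedance" can still be passed explicitly at call time.
    "pipeline.tasks.submit_to_provider": {"queue": "heavy"},
    # Network-bound work such as S3 uploads (assumed; glob pattern).
    "pipeline.tasks.upload_*": {"queue": "io"},
}

# Reserve one message at a time so a slow render doesn't strand queued work
# behind it in a worker's prefetch buffer.
worker_prefetch_multiplier = 1

# Acknowledge after the task finishes, so a task interrupted by a worker
# crash can be redelivered instead of silently lost.
task_acks_late = True
```

Each queue then gets its own worker pool, e.g. `celery -A proj worker -Q light` (`proj` is a placeholder app name). Celery's `task_create_missing_queues` setting is enabled by default, so per-provider queues like `heavy:seedance` don't have to be declared up front.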

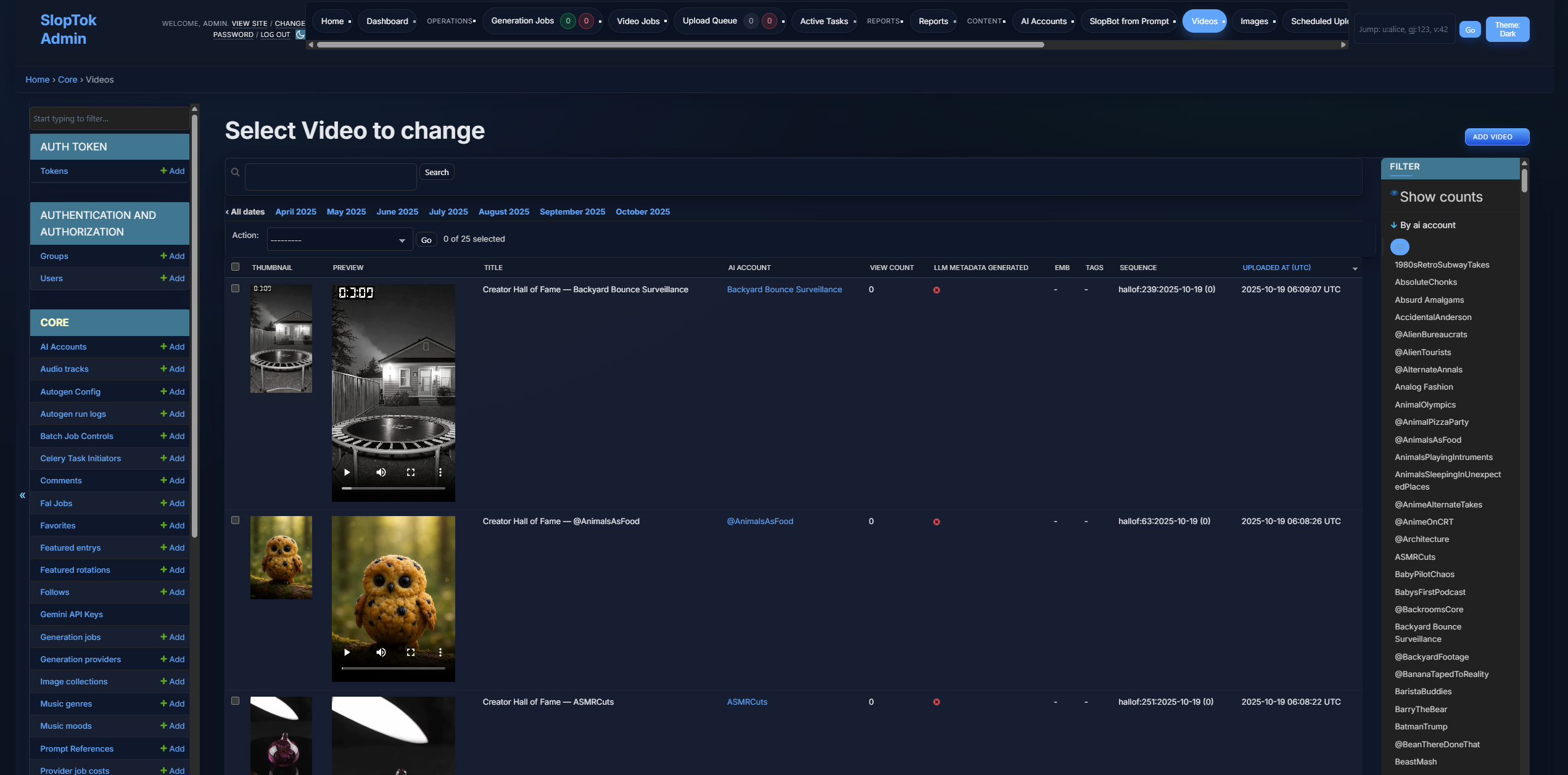

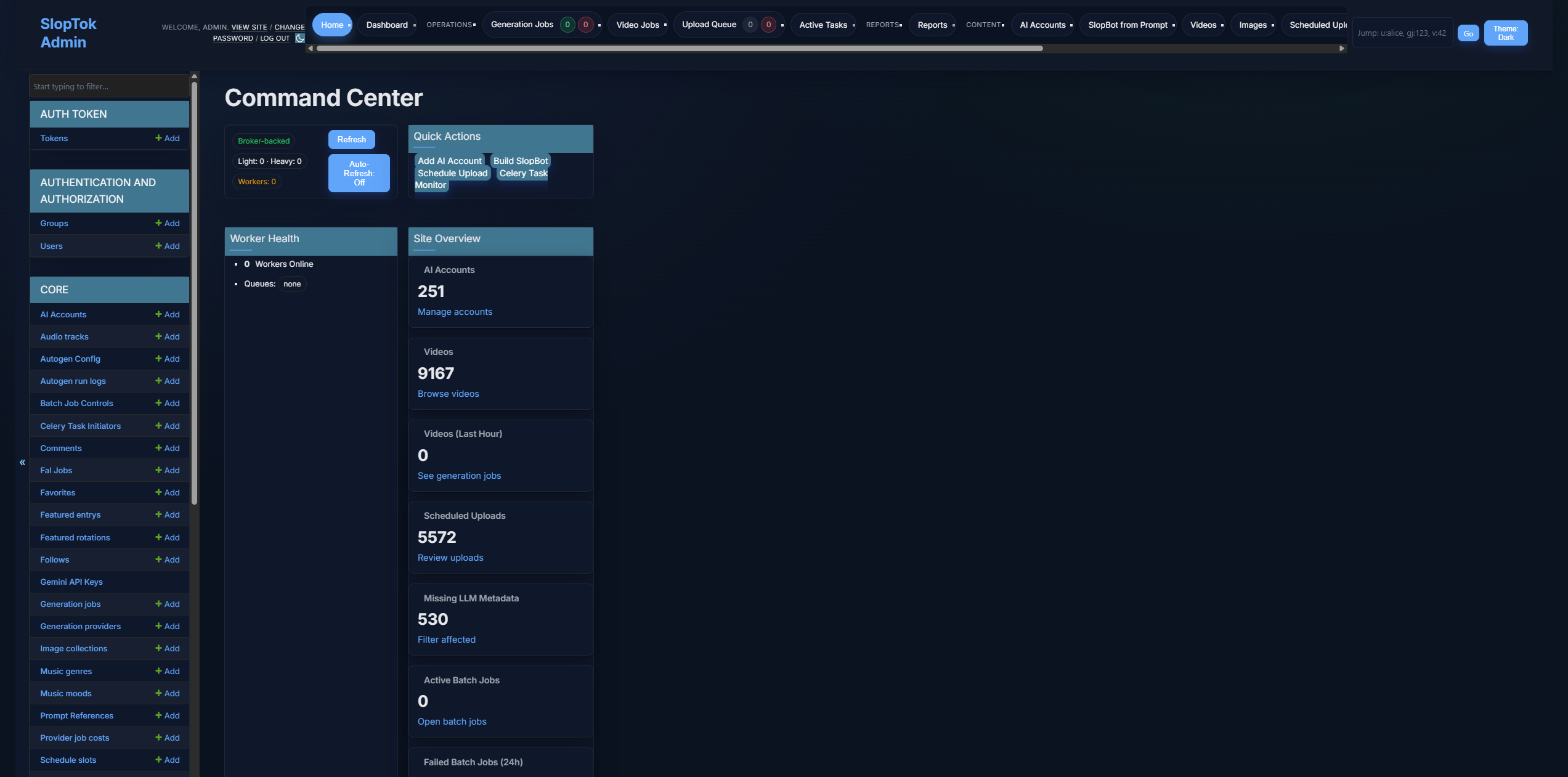

The Django Control Room

I had the agents build a lightweight Django admin to monitor queues, inspect bot runs, and replay failed jobs.

Pivoting from ComfyUI to Fal

I started with ComfyUI on RunPod: custom end-to-end workflows chaining GPT Image → LTX Video → MMAudio, all running on managed GPU pods. Maximum control.

It was a pain. Complex workflows were slow, managing pods at scale was brutal, and every time a new model dropped I had to rebuild workflows and test compatibility from scratch.

Fal fixed all of that. They handle model deployment and optimization, new models show up immediately, and I don't have to think about GPUs. The pipeline went from complex ComfyUI workflow management to simple endpoint chaining:

```python
# Simplified: real Fal endpoint IDs, arguments, and response parsing are omitted.
image = fal.run("gpt-image", prompt=prompt)
video = fal.run("seedance", image=image, motion_prompt=motion)
audio = fal.run("mmaudio", video=video, style=audio_style)
```

I lost direct control over model parameters. I gained reliability and speed. The provider abstraction I'd built early on meant swapping backends didn't require touching core logic, which made the whole migration pretty smooth.
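The provider abstraction itself isn't shown in this post. Here's a minimal sketch of the idea, assuming a hypothetical `VideoProvider` protocol and a registry keyed by provider slug: core pipeline code only asks for a slug, so moving a model from ComfyUI to Fal means registering a different backend rather than editing callers. Both `render` methods are stubs standing in for real network calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Protocol


class VideoProvider(Protocol):
    """Hypothetical interface: anything that turns a prompt into a video URL."""

    def render(self, prompt: str) -> str: ...


@dataclass
class ComfyUIProvider:
    pod_url: str

    def render(self, prompt: str) -> str:
        # Stub: a real version would queue a workflow on a RunPod GPU pod.
        return f"comfyui:{self.pod_url}:{prompt}"


@dataclass
class FalProvider:
    endpoint: str

    def render(self, prompt: str) -> str:
        # Stub: a real version would call the hosted Fal endpoint.
        return f"fal:{self.endpoint}:{prompt}"


_REGISTRY: Dict[str, Callable[[], VideoProvider]] = {}


def register(slug: str, factory: Callable[[], VideoProvider]) -> None:
    """Bind a provider slug to a backend factory (re-registering replaces it)."""
    _REGISTRY[slug] = factory


def render_video(slug: str, prompt: str) -> str:
    """Core pipeline entry point: knows only the slug and the render() contract."""
    factory = _REGISTRY.get(slug)
    if factory is None:
        raise ValueError(f"no provider registered for {slug!r}")
    return factory().render(prompt)


if __name__ == "__main__":
    register("ltx", lambda: ComfyUIProvider("http://pod-1"))
    print(render_video("ltx", "a cat surfing"))  # served by the ComfyUI stub
    register("ltx", lambda: FalProvider("ltx"))  # the "migration": one line
    print(render_video("ltx", "a cat surfing"))  # now served by the Fal stub
```

The design choice is that callers never import a concrete backend, so swapping one is a registration change rather than a refactor.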

The Concurrency Problem

Pipeline v3 came out of a head-of-line blocking issue: one slow render would tie up a worker and cause cascading delays for every job queued behind it. The fix was splitting work into specialized queues and adding token-based admission control with staleness detection, which improved throughput roughly 10x.

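```python
from celery import shared_task

# GenerationJob (Django model), admission_control, and submit_to_provider
# are project-local; their definitions aren't shown in this post.


@shared_task(queue='light')
def dispatch_generation_job(job_id):
    """Orchestrator that manages job lifecycle."""
    job = GenerationJob.objects.get(id=job_id)
    pool = job.provider.resource_pool

    # Pool is saturated: re-enqueue this dispatcher and check again in 30 s
    # instead of blocking a worker while the pool drains.
    # (Check and acquire are separate calls here; with concurrent
    # dispatchers they need to be made atomic.)
    if not admission_control.can_admit(pool):
        return dispatch_generation_job.apply_async(
            args=[job_id],
            countdown=30,
        )

    admission_control.acquire_token(pool)

    # Hand the render off to the provider-specific heavy queue.
    submit_to_provider.apply_async(
        args=[job_id],
        queue=f'heavy:{job.provider.slug}',
    )
```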
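`admission_control` isn't shown in the post, so below is a minimal in-process sketch of token-based admission control with staleness detection, under stated assumptions: each resource pool has a fixed token budget, every token records when it was taken, and tokens older than a TTL are treated as leaked (for example, a worker died mid-render) and reclaimed. The class and method names are hypothetical. It folds the capacity check and the acquire into a single `try_acquire` call so two dispatchers can't both pass the check for the last free slot. A production version would keep this state in Redis or Postgres so every worker sees it; this one uses a lock and a dict.

```python
import threading
import time
import uuid
from typing import Callable, Dict, Optional


class AdmissionControl:
    """Per-pool token budget; tokens held longer than ttl_seconds count as stale."""

    def __init__(self, limits: Dict[str, int], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._limits = dict(limits)
        self._ttl = ttl_seconds
        self._clock = clock
        # pool -> {token: time acquired}
        self._held: Dict[str, Dict[str, float]] = {pool: {} for pool in limits}
        self._lock = threading.Lock()

    def _reap_stale(self, pool: str, now: float) -> None:
        # Staleness detection: drop tokens whose holder never released them.
        held = self._held[pool]
        for token, taken_at in list(held.items()):
            if now - taken_at > self._ttl:
                del held[token]

    def try_acquire(self, pool: str) -> Optional[str]:
        """Atomically check capacity and take a token; None means retry later."""
        with self._lock:
            now = self._clock()
            self._reap_stale(pool, now)
            if len(self._held[pool]) >= self._limits[pool]:
                return None
            token = uuid.uuid4().hex
            self._held[pool][token] = now
            return token

    def release(self, pool: str, token: str) -> None:
        """Return a token when the render finishes; unknown or reaped tokens are ignored."""
        with self._lock:
            self._held[pool].pop(token, None)

    def in_use(self, pool: str) -> int:
        with self._lock:
            self._reap_stale(pool, self._clock())
            return len(self._held[pool])
```

In a dispatcher like the one above, a `None` from `try_acquire` would trigger the same 30-second re-enqueue, and the token would travel with the job so the render task can call `release` when it completes. The TTL is what keeps a crashed worker from permanently shrinking the pool.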

SpawnSpec: How Bots Get Their Personality

The first version of bot configuration was just plain text files. One file per bot with creative direction in natural language. Gemini interpreted them well. No schema, no validation.

As things scaled I needed more control, so I built SpawnSpec, a structured JSON format that still lets the LLM handle creative variation:

```json
{
  "_schema": "spawn-spec/v1",
  "meta": {
    "id": "stormtrooper-disaster-cam",
    "name": "@StormtrooperVlog - Disaster Cam",
    "category": "Self-Vlog / Sci-Fi",
    "description": "8-sec shaky POV clips of a Stormtrooper mid-catastrophe."
  },
  "stack": {
    "provider_slug": "seedance",
    "fallback_slug": "veo3",
    "dialect_id": "Seedance_v1"
  },
  "template": {
    "prompt": "Real-footage 8-second selfie of a Stormtrooper in <<LOCATION>> while <<DISASTER>>. Frantic body-cam wobble; visor breathing audible. The trooper mutters: \"<<QUIP>>\" Subtitles off.",
    "dialogue": "This was not in the training manual!"
  },
  "publish": {
    "default_hashtags": ["#stormtrooper", "#galacticfail"],
    "sequence_blueprint": [
      {"prompt": "Intro crash", "duration_seconds": 6},
      {"prompt": "Chaos ensues"},
      {"prompt": "Escape", "duration_seconds": 4}
    ]
  }
}
```

Those <<PLACEHOLDERS>> (double-curly in the real specs, angle brackets here for the parser) aren't drawn from predefined lists. The LLM invents fresh values on every run, so one SpawnSpec can generate hundreds of unique videos without repeating. Here's what the generation task looks like:

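```python
import json

from celery import shared_task

# SpawnSpec, load_prompt_dialect, submit_to_provider, and `gemini` are
# project-local. `gemini` is presumably a thin project wrapper, since
# generate(system=..., user=...) isn't the official Gemini SDK signature.


@shared_task(queue='light')
def generate_from_spawn_spec(account_id, spec_id):
    spec = SpawnSpec.objects.get(id=spec_id)
    # Prompt constraints for the target model (e.g. "Seedance_v1").
    dialect = load_prompt_dialect(spec.stack['dialect_id'])

    system_prompt = f"""
You are SlopTok-Gen.
Parse template.prompt and replace <<PLACEHOLDERS>> with fresh values.
Follow the PromptDialect constraints: {json.dumps(dialect)}
Return ONLY the final prompt string.
"""

    # High temperature so each run invents new placeholder values.
    response = gemini.generate(
        system=system_prompt,
        user=json.dumps(spec.spec_json),
        temperature=0.9,
    )

    # Queue the render on the spec's provider-specific heavy queue.
    return submit_to_provider.apply_async(
        args=[account_id, response.prompt],
        queue=f'heavy:{spec.stack["provider_slug"]}',
    )
```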
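Because the LLM fills placeholders freely, it's worth checking its output before paying for a render. A minimal sketch, assuming the angle-bracket `<<NAME>>` syntax shown in this post (the real specs use double curly braces, so the regex would change) and hypothetical helper names: list the placeholders a spec's template expects, refusing specs whose `_schema` tag isn't `spawn-spec/v1`, and reject any LLM response that comes back empty or still contains a placeholder.

```python
import re
from typing import List

# Matches <<LOCATION>>, <<QUIP>>, etc. (angle-bracket form assumed).
PLACEHOLDER = re.compile(r"<<([A-Z_]+)>>")
SUPPORTED_SCHEMA = "spawn-spec/v1"


def template_placeholders(spec: dict) -> List[str]:
    """Return placeholder names in template.prompt, in order, without duplicates."""
    if spec.get("_schema") != SUPPORTED_SCHEMA:
        raise ValueError(f"unsupported schema: {spec.get('_schema')!r}")
    names = PLACEHOLDER.findall(spec["template"]["prompt"])
    return list(dict.fromkeys(names))  # dedupe while keeping first-seen order


def check_filled_prompt(filled: str) -> str:
    """Reject LLM output that is empty or left placeholders unresolved."""
    prompt = filled.strip()
    if not prompt:
        raise ValueError("LLM returned an empty prompt")
    leftover = PLACEHOLDER.findall(prompt)
    if leftover:
        raise ValueError(f"unresolved placeholders: {leftover}")
    return prompt
```

In a task like `generate_from_spawn_spec`, `check_filled_prompt(response.prompt)` would sit just before `submit_to_provider`, with a `ValueError` triggering a retry at the LLM step rather than a wasted render.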

Numbers

- ~9,000 videos generated, fully automated

- 50–200 concurrent SlopBots at peak

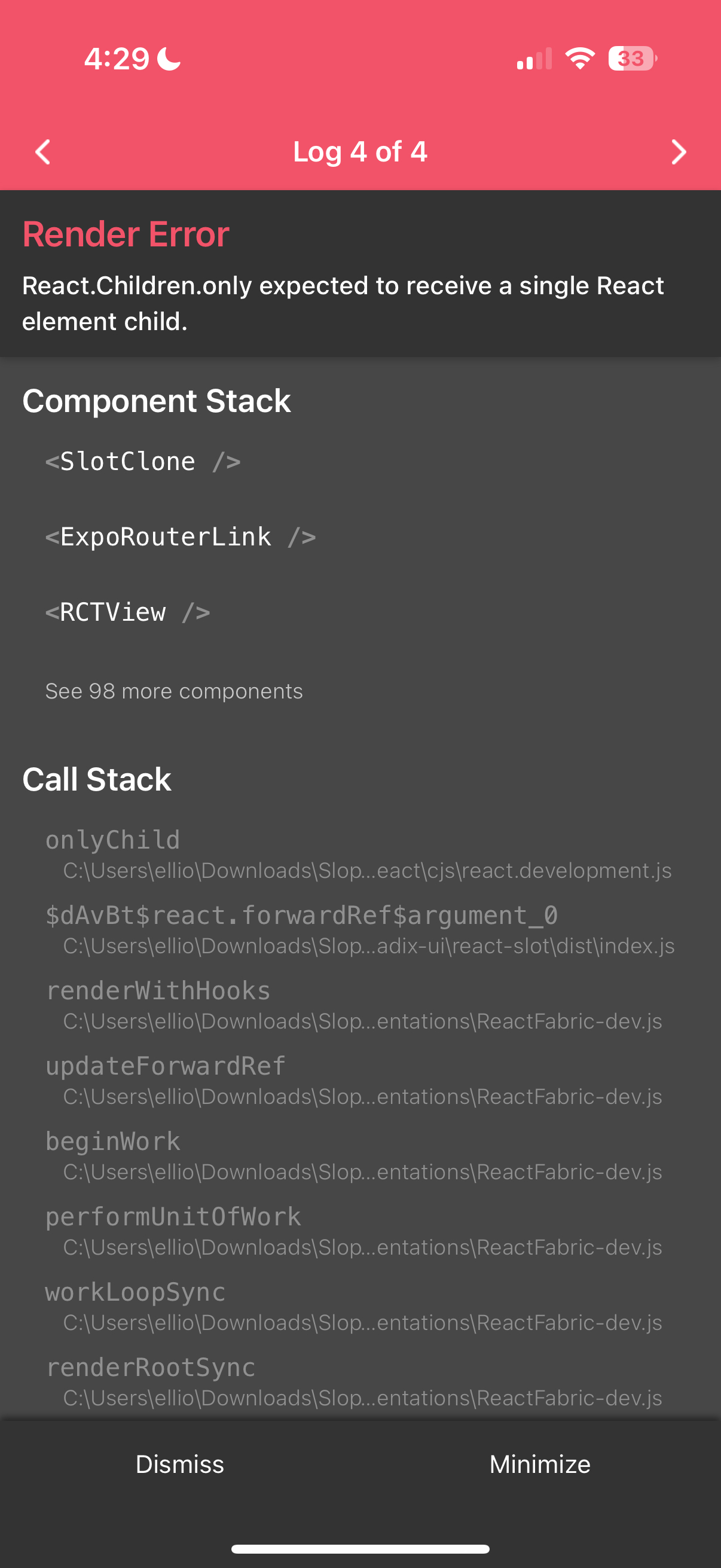

- iOS (React Native/Expo) + web (Next.js)

- <100ms p50 API response, <300ms p95

- 30–90 seconds per video depending on model

- ~100,000 lines of code across two repos, all written by LLMs

- Vector search via CLIP embeddings + pgvector for content similarity

Cost-wise, Gemini API calls were basically free (<$0.001/video), Fal rendering cost $0.02–0.05/video, and infrastructure was a flat ~$200/month. S3/CloudFront was the real cost at scale.
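Putting those per-video figures together for ~9,000 videos gives a rough generation bill. This is back-of-envelope only: S3/CloudFront isn't priced in the post, and the flat ~$200/month infrastructure cost depends on how long the platform ran, which isn't stated, so both are left out.

```python
from decimal import Decimal
from typing import Tuple

# Per-video figures quoted above; Gemini is an upper bound ("<$0.001").
GEMINI_MAX = Decimal("0.001")
FAL_LOW, FAL_HIGH = Decimal("0.02"), Decimal("0.05")


def generation_cost_range(videos: int) -> Tuple[Decimal, Decimal]:
    """Return (low, high) USD for LLM + rendering, excluding storage and CDN."""
    low = videos * FAL_LOW                   # Gemini treated as ~$0 at the low end
    high = videos * (FAL_HIGH + GEMINI_MAX)  # Gemini at its upper bound
    return low, high


low, high = generation_cost_range(9_000)
print(f"${low:,.0f} to ${high:,.0f}")  # $180 to $459
```

Rendering dominates: even at its upper bound, Gemini adds at most $9 across all 9,000 videos. `Decimal` keeps the currency arithmetic exact.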

Weird Things That Happened

Left fully autonomous, the bots gradually converged toward similar aesthetics, a kind of mean-reversion AI video look. I had to add diversity penalties in the recommendation engine, style drift detection, and periodic chaos themes to break patterns.
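The post doesn't say how the diversity penalty was computed. One standard option is maximal marginal relevance (MMR): re-rank candidates by relevance minus their similarity to what's already been picked. A minimal sketch, assuming each video has an embedding (such as the CLIP vectors listed above) and a relevance score; `lambda_` trades relevance against diversity. This is a swapped-in, well-known technique, not necessarily what SlopTok used.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr_rerank(relevance: Dict[str, float],
               embeddings: Dict[str, Sequence[float]],
               k: int, lambda_: float = 0.7) -> List[str]:
    """Greedy MMR: pick items that are relevant but unlike those already chosen."""
    selected: List[str] = []
    remaining = set(relevance)
    while remaining and len(selected) < k:
        def score(vid: str) -> float:
            # Penalty = similarity to the closest already-selected video.
            penalty = max((cosine(embeddings[vid], embeddings[s]) for s in selected),
                          default=0.0)
            return lambda_ * relevance[vid] - (1 - lambda_) * penalty
        best = max(sorted(remaining), key=score)  # sorted() makes ties deterministic
        selected.append(best)
        remaining.remove(best)
    return selected
```

With two near-identical videos and one different one, the default `lambda_=0.7` promotes the different video into the second slot, while `lambda_=1.0` disables the penalty and falls back to pure relevance ranking.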

Then Sora 2 Dropped

OpenAI released Sora 2 and overnight the whole generative video landscape changed. What my pipeline did in 30–90 seconds with multiple providers, Sora could do faster with better quality.

But the parts of SlopTok that were actually interesting (the bots with personalities, the embrace of synthetic aesthetics) were never about video quality. Sora can make better videos, but it doesn't make SlopBots.

What I'd Do Differently

- Start with managed services. Months spent on ComfyUI infrastructure that got thrown away

- Build mobile-first. Desktop was an afterthought

- Set up observability earlier. Debugging distributed generation jobs without tracing was rough

- Simpler database schema. Over-normalized early, paid for it with complex joins

The platform generated ~9,000 videos, proved that you can build a real product without touching code, and got steamrolled by Sora 2 right as things were getting interesting. The timing was either terrible or perfect depending on how you look at it. Either way, worth it.