Universe Index

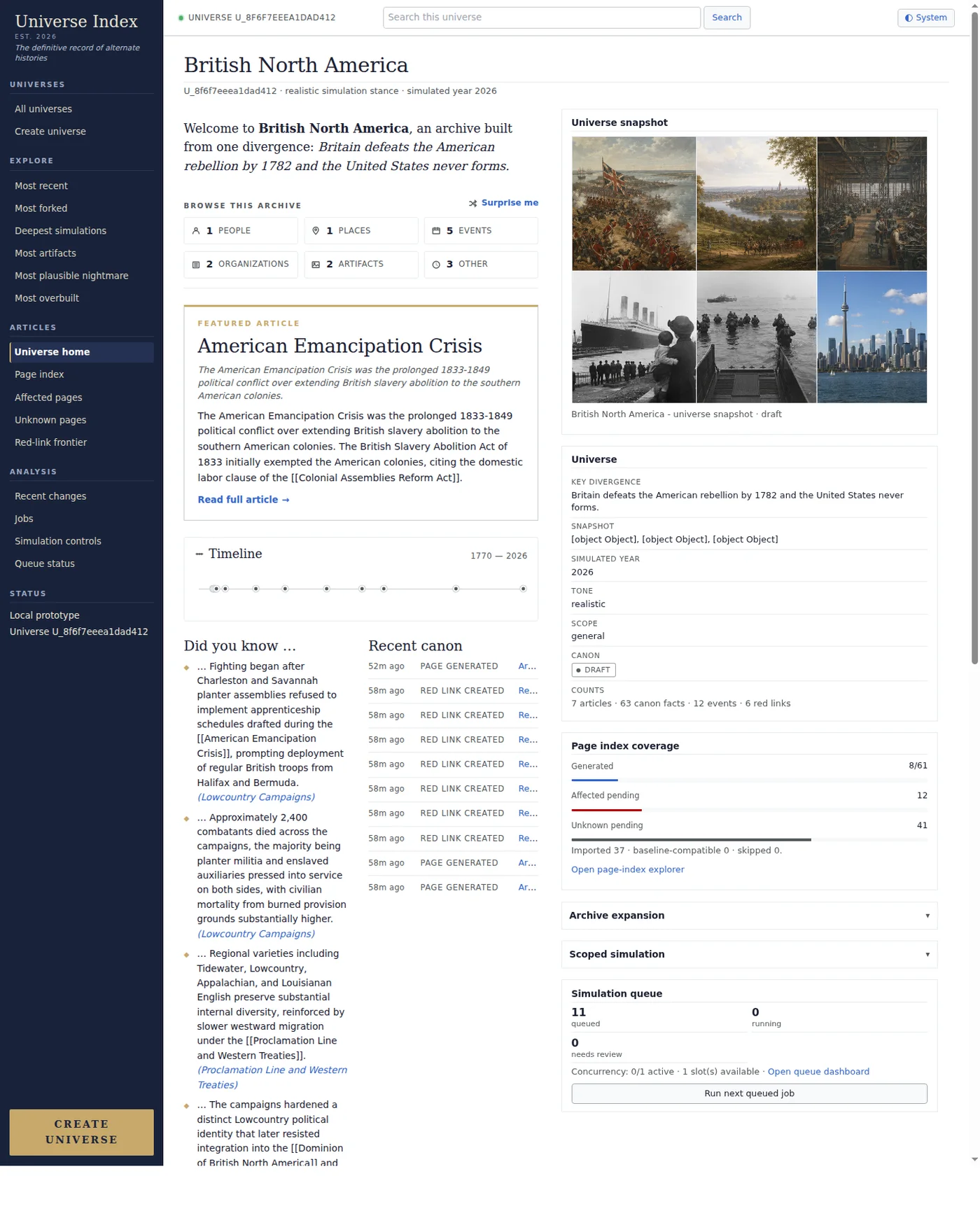

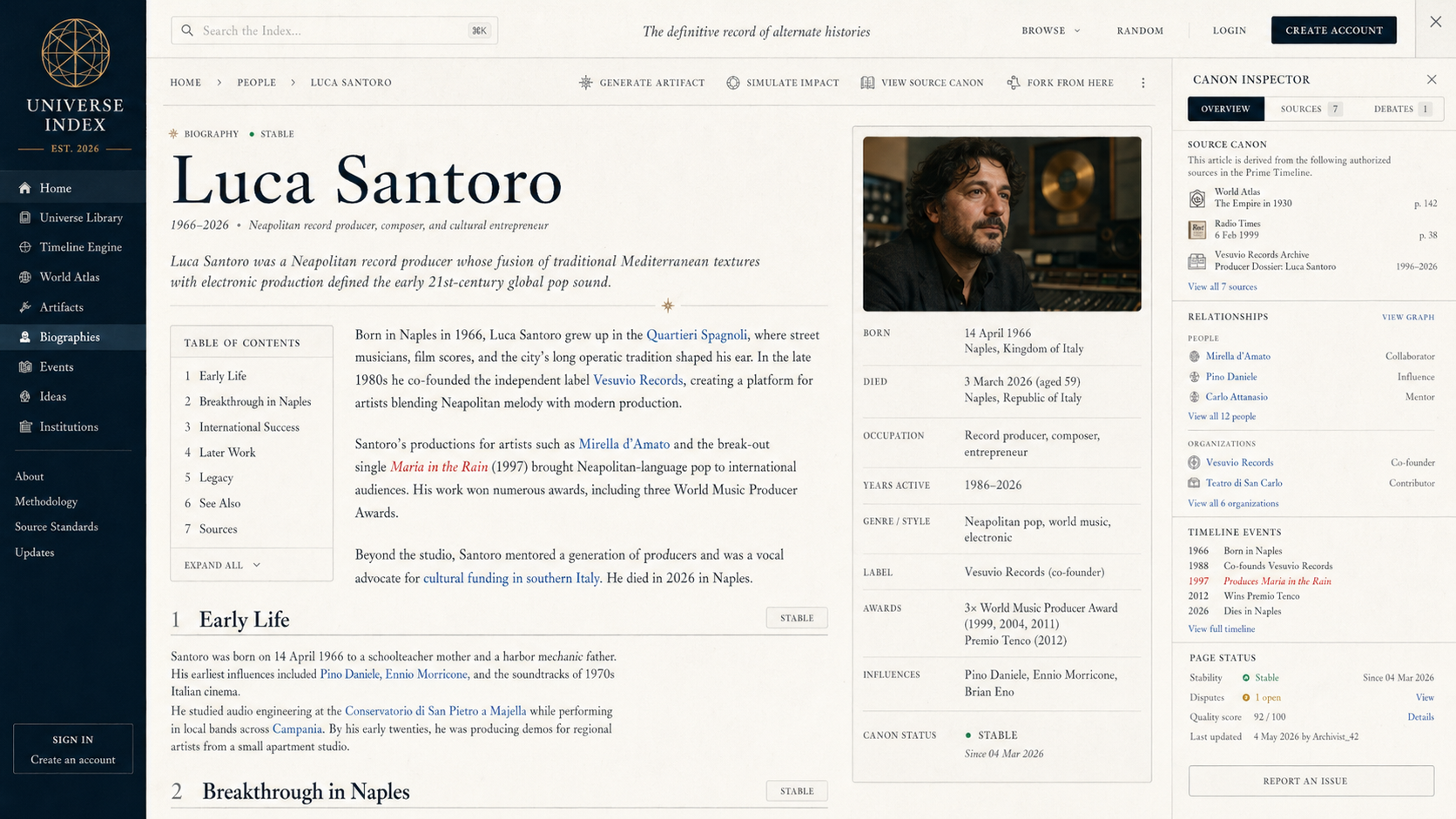

Universe Index is my alternate-history universe simulator: change one fact, then watch the archive grow around it. The app turns a premise into universes, entities, canon facts, timeline events, page indexes, red links, artifacts, revisions, and article pages that feel like they were discovered instead of written on demand.

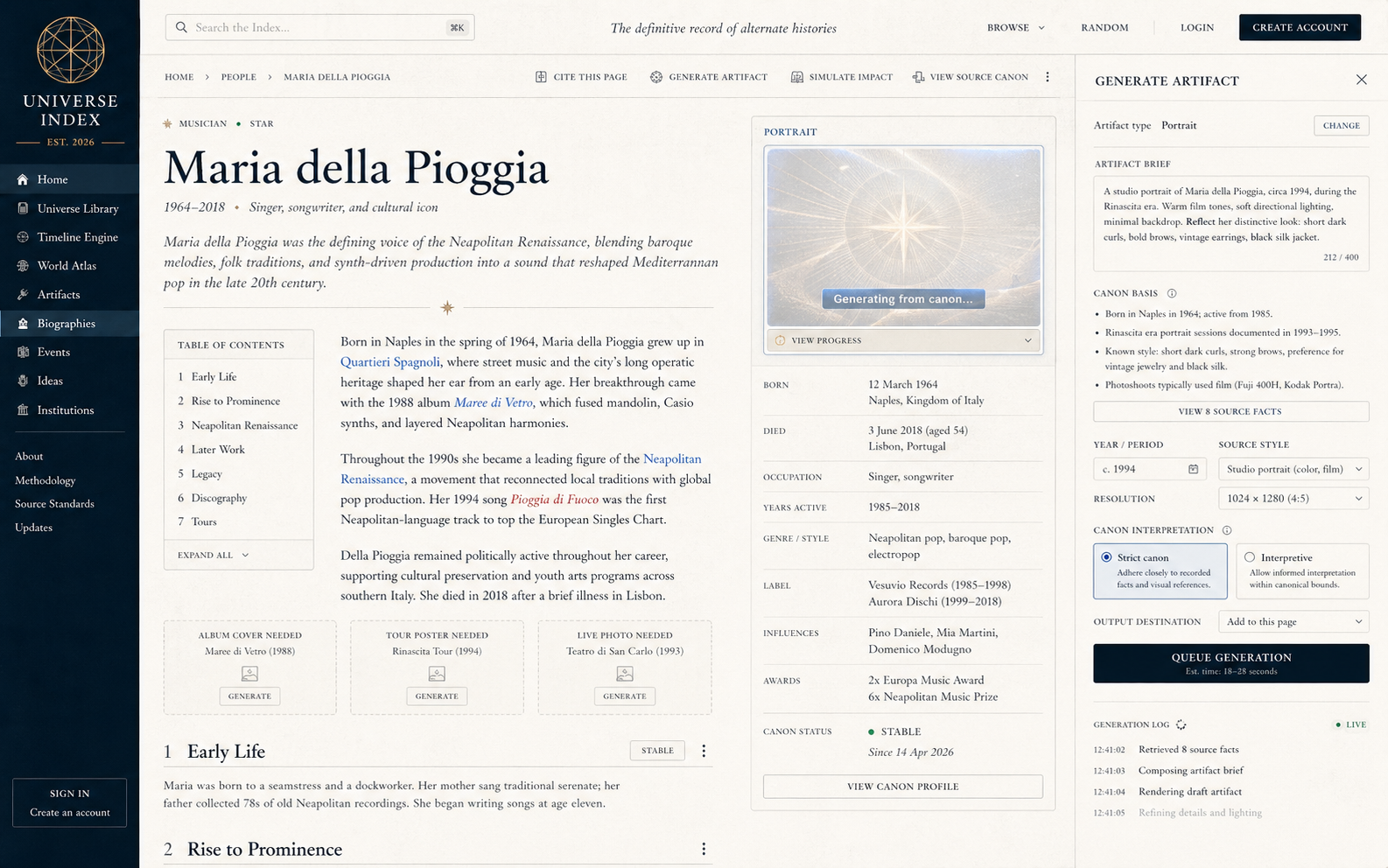

The deeper idea is that language models make it possible to generate dynamic content that is not just text pasted into a box, but an explorable media system. In Universe Index, that starts with encyclopedia entries, canon logs, timelines, artifacts, and generated images. Eventually I want it to become more fully multimedia: universe-specific music, fake albums, fictional artists, radio stations, documentary clips, maps, posters, interfaces, and other cultural residue from each timeline. A universe should not only have a different history. It should have its own texture.

It is also one of the most fun vibe-coded projects I have worked on. I am steering the taste, premise, simulation rules, and product feel, while agents do a huge amount of the implementation work. Codex and Claude have become the build loop: GPT-5.5 for deep system work and bug fixing, Opus 4.7 for product/design passes, architecture discussion, and polish. The joy is not just getting code faster. It is getting to work with agents as collaborators that can hold a big product in their heads, make changes across the stack, and keep pushing the world forward.

What It Does

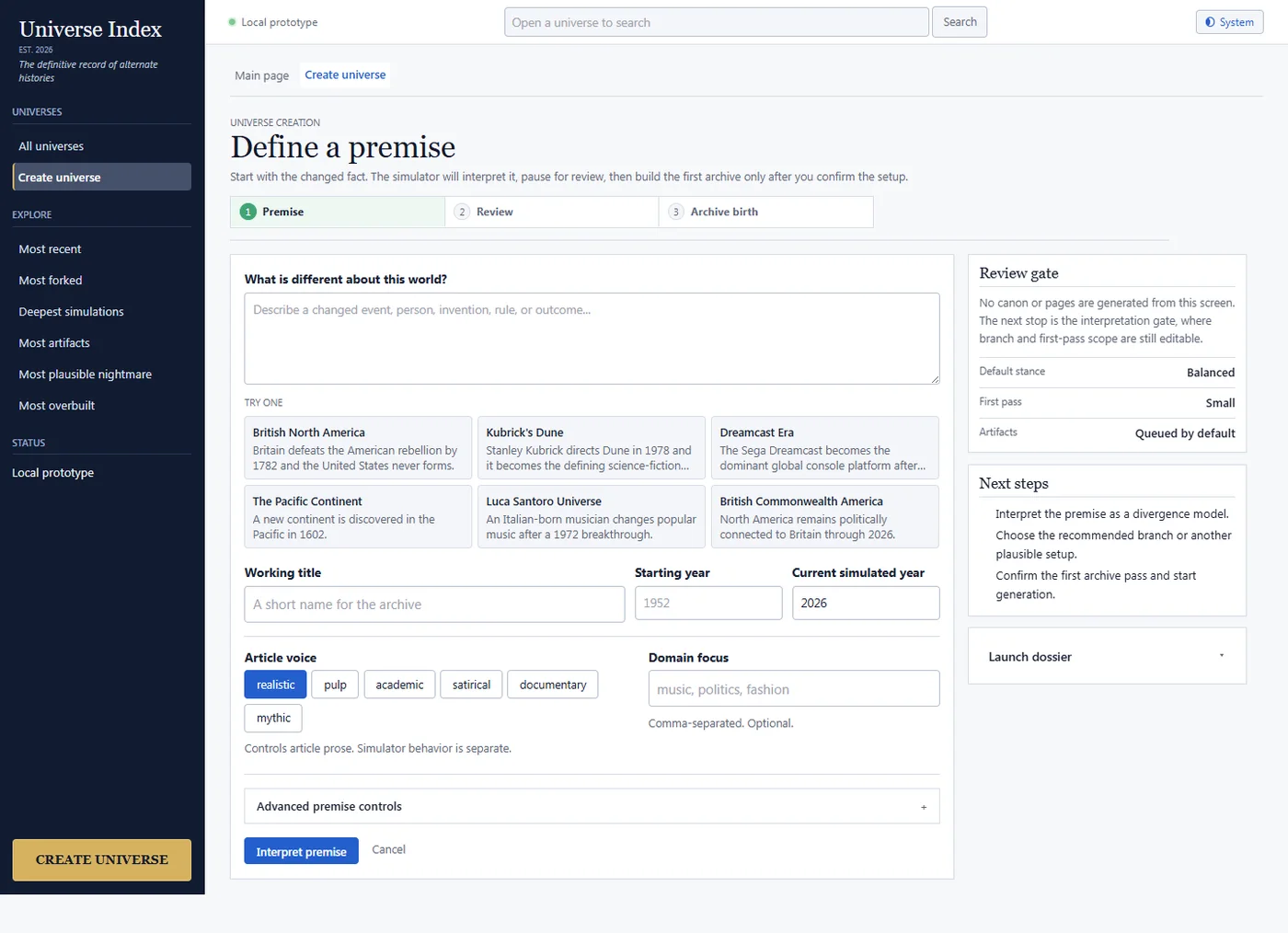

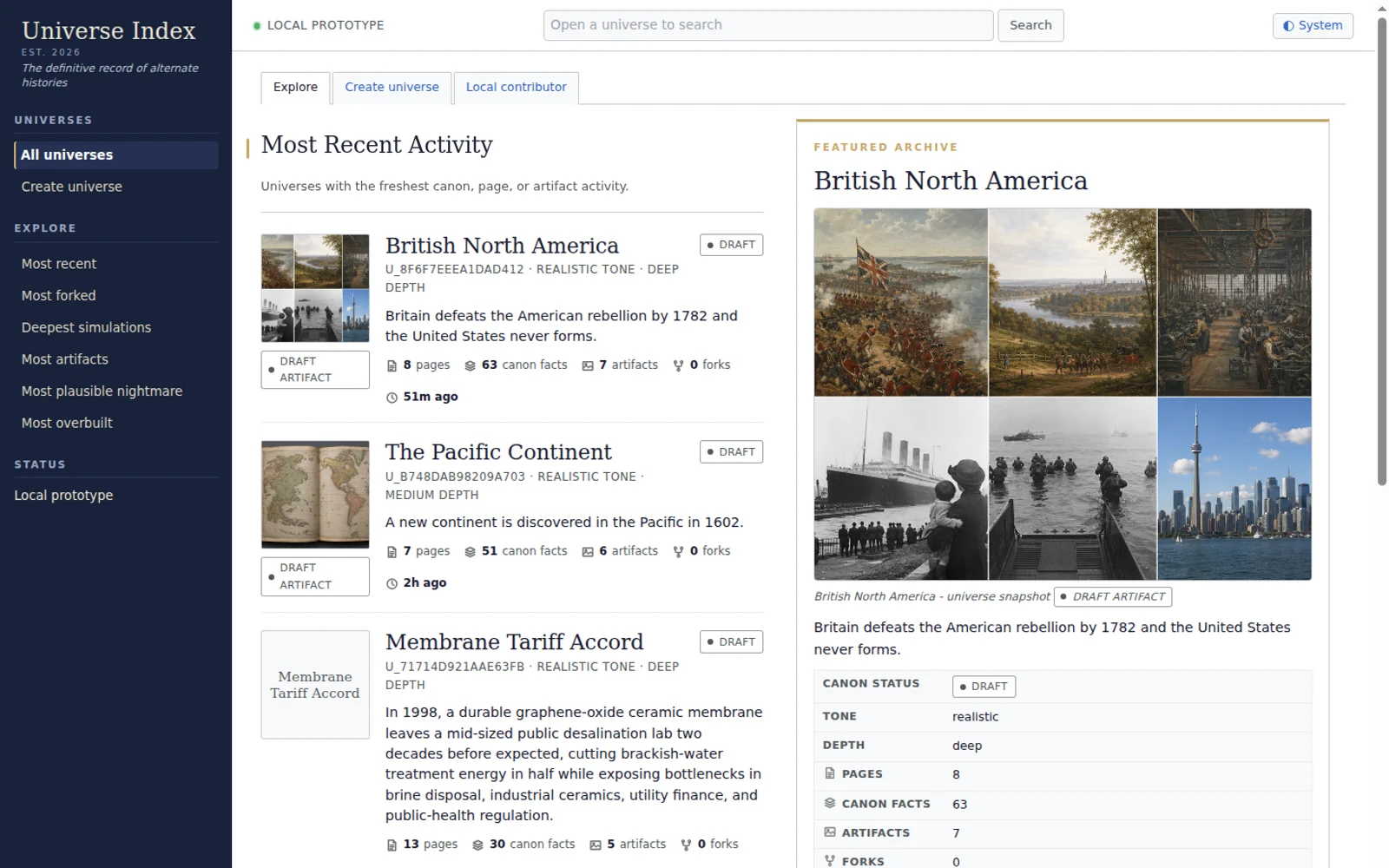

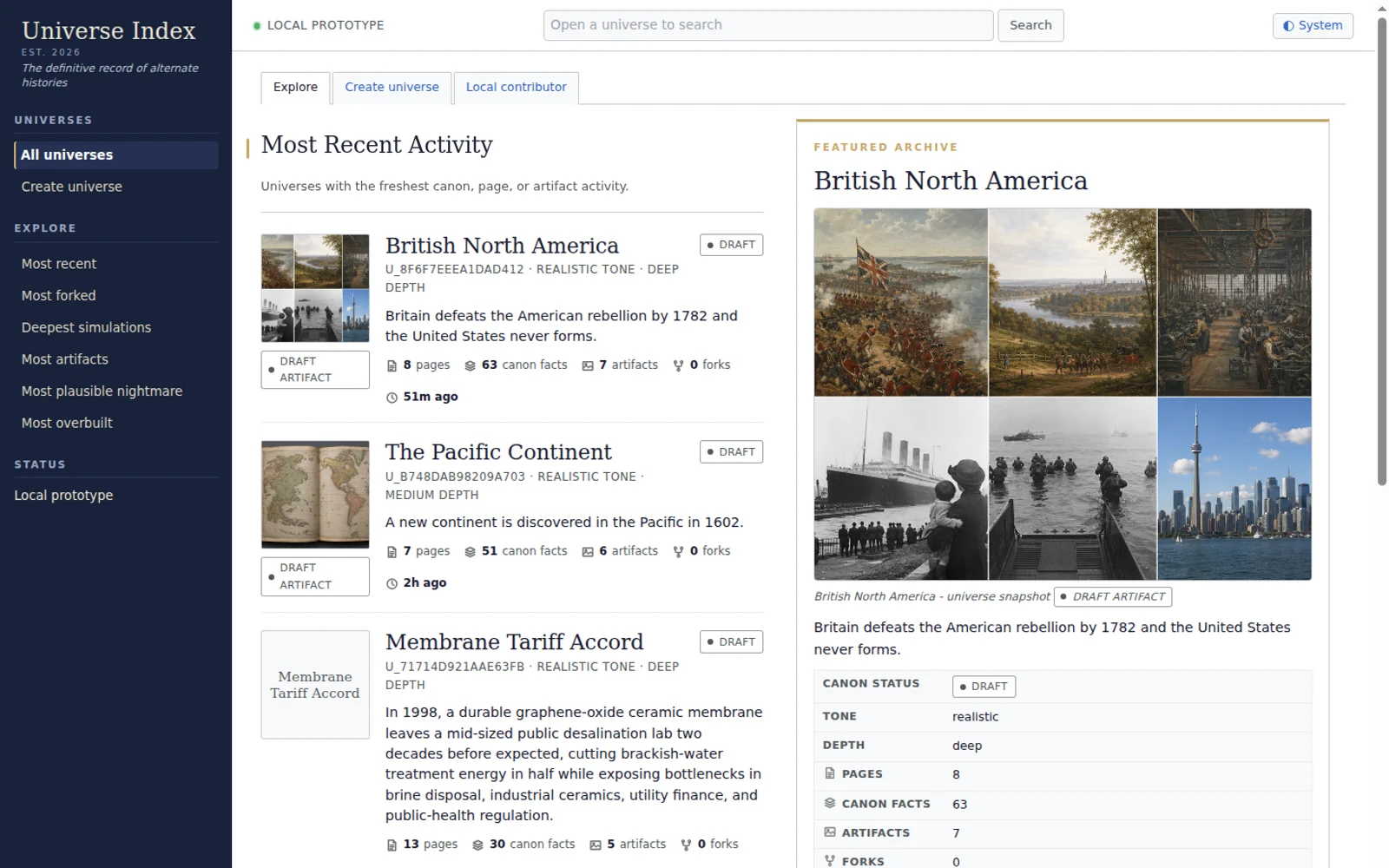

The core promise is simple: enter a changed fact, then let the system build a living encyclopedia for that timeline. A universe can start small, then expand through generated articles, red-link dossiers, affected-page queues, artifact slots, branch forks, proposal review, and simulation jobs.
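To make the red-link idea concrete, here is a minimal Python sketch. It is not the app's real schema: the `Universe` and `Page` classes, their fields, and the sample premise are all invented for illustration. The only idea taken from the description above is that a universe starts from a changed fact and that links to pages that do not exist yet are candidates for expansion.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    title: str
    body: str
    links: list[str] = field(default_factory=list)  # titles this page mentions


@dataclass
class Universe:
    premise: str  # the changed fact that seeds the timeline (hypothetical field)
    pages: dict[str, Page] = field(default_factory=dict)

    def add_page(self, page: Page) -> None:
        self.pages[page.title] = page

    def red_links(self) -> list[str]:
        """Titles that some page links to but that have no page yet."""
        missing = {
            link
            for page in self.pages.values()
            for link in page.links
            if link not in self.pages
        }
        return sorted(missing)


# Invented sample data, purely for illustration.
universe = Universe(premise="The Library of Alexandria never burned")
universe.add_page(
    Page(
        "Library of Alexandria",
        "placeholder article text",
        links=["Alexandrian Academy", "Ptolemaic Press"],
    )
)
universe.add_page(
    Page("Alexandrian Academy", "placeholder article text", links=["Ptolemaic Press"])
)

print(universe.red_links())  # ['Ptolemaic Press']
```

In this toy version a red link is simply a title that is referenced but not yet written, which is enough to show why a small universe always has somewhere to grow next.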

The product is intentionally not a chat app. The center of the experience is the archive: pages, categories, timelines, history, source-canon metadata, red links, and operator rails. The fun is clicking around a world that feels like it already exists, then deciding where to spend more simulation energy.

That archive can also become a container for generated media. A political movement in one universe might have posters and speeches. A city might have skyline images, maps, neighborhood pages, and transit diagrams. A music scene might have artists who only exist in that timeline, with their own album art, lyrics, interviews, and eventually songs generated to match the sound of that world. That is what makes the project exciting to me as a language-model use case: it treats models as engines for coherent, durable worldbuilding instead of one-off answers.

Why It Is Fun With Agents

Universe Index is exactly the kind of project where agents feel different from normal coding help. The system has product taste, interface work, database shape, queue logic, LLM prompts, simulation rules, image generation, exports, and a lot of small UX judgment. A single change often touches several layers.

My loop is: describe the next behavior, let an agent patch it, run the app, inspect the weirdness, then tighten the direction. Codex is great for sustained repo work: migrations, tests, type errors, queue behavior, and cross-file changes. Claude is great for product sense, critique, UX simplification, and alternative architecture. GPT-5.5 and Opus 4.7 together make it feel like I am managing a tiny studio of senior builders, not just prompting autocomplete.

That is the vibe-coded part: I am not trying to hand-author every line. I am trying to keep the taste sharp, keep the premise weird, and keep the archive feeling alive.

Lately I have also been playing with GPT image generation as part of the design loop. I will generate high-fidelity front-end concepts, use them as visual targets, then ask coding agents to translate the best parts back into the real interface. After that I can screenshot the actual app, compare it against the concept, and recursively improve the UI. It feels like image-to-web development: concept art and implementation feeding each other until the product starts to look like the thing I meant.

These generated images are not screenshots of the current build. They are design probes: high-fidelity targets for mood, density, navigation, artifact surfaces, and archive behavior. The useful part is that they make taste concrete. Instead of describing "Wikipedia, but for living alternate universes" in the abstract, I can generate a possible version of the interface, critique it, then ask agents to pull the best ideas back into the real product.

Where It Is Going

The north star is an eventually exhaustive encyclopedia simulator. Not literally generating all of Wikipedia on day one, but treating every possible page as addressable, queueable, and expandable. A small universe might have 25 pages. A deeper run might have hundreds or thousands. The point is that every generated page becomes durable canon the user can inspect, revise, fork, dispute, or expand.

The more I work on it, the more it feels like the right shape for agent-built software: a product where the agents are not hidden behind a chat box, but are actively helping grow a strange, coherent world. I want the same underlying system to eventually support text, images, music, video, and whatever other generated artifacts make a timeline feel lived in.

It is also one example of how I like to learn. I make things, push them until they get interesting, and use the process to understand the tools. Universe Index is teaching me a lot about agentic workflows, long-running product context, generative media, and how to turn a vague premise into a working system. That pattern applies across a lot of my projects: build the thing, learn the medium, then let the next version get stranger and more capable.